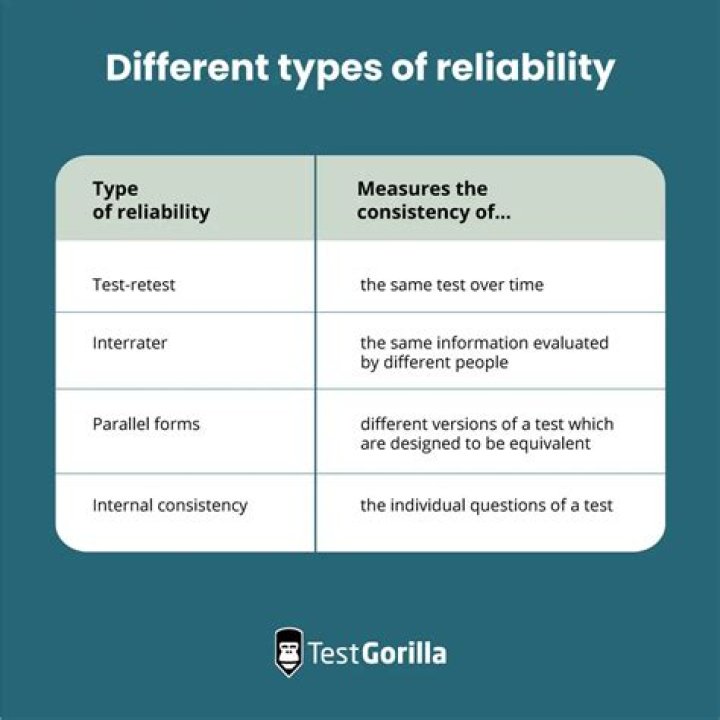

Here are the four most common ways of measuring reliability for any empirical method or metric:

- inter-rater reliability.

- test-retest reliability.

- parallel forms reliability.

- internal consistency reliability.

Can a measure be valid without being reliable?

A measure can be reliable but not valid, if it is measuring something very consistently but is consistently measuring the wrong construct. Likewise, a measure can be valid but not reliable if it is measuring the right construct, but not doing so in a consistent manner.

What is being measured in a reliability test?

Test reliability. Reliability refers to how dependably or consistently a test measures a characteristic. If a person takes the test again, will he or she get a similar test score, or a much different score? A test that yields similar scores for a person who repeats the test is said to measure a characteristic reliably.

How are validity and reliability measured?

Reliable measures are those with low random (chance) errors. Reliability is assessed by one of four methods: retest, alternative-form test, split-halves test, or internal consistency test. Validity is measuring what is intended to be measured. Valid measures are those with low nonrandom (systematic) errors.

What does it mean that reliability is necessary but not sufficient for validity?

Reliability is necessary but not sufficient for validity. If you used a normal, working set of bathroom scales to measure your height, it would give you the same reading every time (assuming your weight doesn’t fluctuate) and so be reliable, but that reading still wouldn’t be a valid measure of height.

What’s the difference between reliability and validity?

Reliability and validity are both about how well a method measures something: Reliability refers to the consistency of a measure (whether the results can be reproduced under the same conditions). Validity refers to the accuracy of a measure (whether the results really do represent what they are supposed to measure).

How do you test for validity?

Test validity can itself be tested/validated using tests of inter-rater reliability, intra-rater reliability, repeatability (test-retest reliability), and other traits, usually via multiple runs of the test whose results are compared.

How do you calculate percentage reliability?

Inter-Rater Reliability Methods

- Count the number of ratings in agreement. In this example, that’s 3.

- Count the total number of ratings. For this example, that’s 5.

- Divide the number in agreement by the total to get a fraction: 3/5.

- Convert to a percentage: 3/5 = 60%.
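The steps above can be sketched in a few lines of Python. This is a minimal illustration, not a standard library routine: the function name `percent_agreement` and the two rating lists are hypothetical, and the ratings were chosen only so that three of the five match, as in the example.

```python
def percent_agreement(rater_a, rater_b):
    """Return the percentage of items on which two raters gave the same rating."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must rate the same non-empty set of items")
    # Step 1: count the ratings in agreement.
    agreements = sum(1 for a, b in zip(rater_a, rater_b) if a == b)
    # Steps 2-4: divide by the total number of ratings and convert to a percentage.
    return 100 * agreements / len(rater_a)


# Hypothetical ratings for five items; three of the five pairs match.
rater_a = ["yes", "no", "yes", "yes", "no"]
rater_b = ["yes", "no", "no", "yes", "yes"]

print(percent_agreement(rater_a, rater_b))  # 60.0
```

Percent agreement does not correct for agreement that would occur by chance, so it is best read as a rough first check.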

What are the 4 types of reliability?

There are four main types of reliability:

- Test-retest reliability.

- Interrater reliability.

- Parallel forms reliability.

- Internal consistency.

What are the factors affecting validity?

Here are seven important factors that affect external validity:

- Population characteristics (subjects)

- Interaction of subject selection and research.

- Descriptive explicitness of the independent variable.

- The effect of the research environment.

- Researcher or experimenter effects.

- Data collection methodology.

- The effect of time.

What are the three types of reliability?

Reliability refers to the consistency of a measure. Psychologists consider three types of consistency: over time (test-retest reliability), across items (internal consistency), and across different researchers (inter-rater reliability).

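Why is a reliable test not always valid?

To ensure reliability, the degree of variation between repeated measurements must be small. Test-retest reliability is established by comparing the scores the same individuals obtain on two occasions and calculating the correlation between them. A strong correlation indicates reliability, but it shows only that the scores are consistent, not that the test measures the intended construct.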
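As a rough sketch of the test-retest calculation, the Pearson correlation between two administrations of the same test can be computed as follows. The score lists are made-up illustrative data, and `pearson_r` is a hypothetical helper name written out by hand rather than taken from a particular library.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equally long lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two lists of equal length with at least two scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sums of cross-products and squared deviations from the means.
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when one list has no variation")
    return cov / sqrt(ss_x * ss_y)


# Hypothetical scores of the same five people on two occasions.
first_test = [12, 15, 9, 20, 17]
second_test = [13, 14, 10, 19, 18]

r = pearson_r(first_test, second_test)
print(round(r, 2))
```

A value close to 1 suggests that people keep roughly the same relative standing across the two occasions. How high the correlation must be to count as "strong" is a judgment that depends on the field.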

How do you know if research is reliable?

Questions to ask when judging the credibility of a research report:

- Why was the study undertaken?

- Who conducted the study?

- Who funded the research?

- How was the data collected?

- Are the sample size and response rate sufficient?

- Does the research make use of secondary data?

- Does the research measure what it claims to measure?

What is an example of validity?

Validity refers to how well a test measures what it is purported to measure. For a test to be valid, it also needs to be reliable, but a reliable test is not necessarily valid. For example, if your scale is off by 5 lbs, it reads your weight every day with an excess of 5 lbs: the readings are consistent (reliable) but not accurate (valid).
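The scale example can be made concrete with a small numerical sketch. The true weight and the readings below are invented for illustration. The average offset from the true value stands in for the systematic error, and the spread of the readings stands in for the random error.

```python
from statistics import mean, pstdev

# Hypothetical data: a person who truly weighs 150 lbs steps on a scale
# that is off by about 5 lbs, on five different days.
true_weight = 150.0
readings = [155.0, 155.1, 154.9, 155.0, 155.0]

# Systematic error: how far the readings are from the truth on average.
bias = mean(readings) - true_weight
# Random error: how much the readings scatter around their own mean.
spread = pstdev(readings)

print(f"bias = {bias:.2f} lbs, spread = {spread:.2f} lbs")
```

The small spread shows that the scale is consistent, while the bias of roughly 5 lbs shows that it is consistently wrong.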

How do you know if a measure is reliable?

Reliability refers to how consistently a method measures something. If the same result can be consistently achieved by using the same methods under the same circumstances, the measurement is considered reliable.

Which is an example of reliability in a measure?

Reliability is a measure of the stability or consistency of test scores. You can also think of it as the ability of a test or research finding to be repeated. For example, a medical thermometer is a reliable tool if it gives the same reading each time it is used under the same conditions.

What is a perfectly reliable measure?

A measure that has no random error is perfectly reliable; a measure that has no true score (i.e., is all random error) has zero reliability. Formally, var(X) = var(T) + var(e), where X is the observed score, T is the true score, and e is the random error. This means that the variability of your measure is the sum of the variability due to true score and the variability due to random error.
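The decomposition can be turned into a number. The source states only the variance sum; the sketch below additionally assumes the usual classical test theory definition of the reliability coefficient as the share of observed variance due to true score, var(T) / var(X). The function name `reliability` and the input values are hypothetical.

```python
def reliability(var_true, var_error):
    """Share of observed-score variance due to true score: var(T) / var(X)."""
    if var_true < 0 or var_error < 0:
        raise ValueError("variances cannot be negative")
    var_observed = var_true + var_error  # var(X) = var(T) + var(e)
    if var_observed == 0:
        raise ValueError("reliability is undefined when the measure never varies")
    return var_true / var_observed


print(reliability(8.0, 2.0))  # 0.8: most of the variance is true score
print(reliability(5.0, 0.0))  # 1.0: no random error, perfectly reliable
print(reliability(0.0, 3.0))  # 0.0: all random error, zero reliability
```

The two extreme cases correspond to the perfectly reliable and zero-reliability measures described above.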

What makes good internal validity?

Internal validity is the extent to which a study establishes a trustworthy cause-and-effect relationship between a treatment and an outcome. The less chance there is for “confounding” in a study, the higher the internal validity and the more confident we can be in the findings.

Does random error affect validity?

In order to determine if your measurements are reliable and valid, you must look for sources of error. There are two types of errors that may affect your measurement, random and nonrandom. Random error consists of chance factors that affect the measurement. The more random error, the less reliable the instrument.

Which is not a reliable measure of how well a company?

The company’s development of human capital, organizational capital, and information capital. Changes in the firm’s image and reputation with its customers. The company’s overall financial strength. Evidence of improvement in internal processes such as defect rate, order fulfillment, and employee productivity.

Can a measurement be reliable without being valid?

A measurement can be reliable without being valid. However, if a measurement is valid, it is usually also reliable.

Which is the most reliable measure of reliability?

Test-retest reliability. Test-retest reliability is a measure of consistency between two measurements (tests) of the same construct administered to the same sample at two different points in time. If the observations have not changed substantially between the two tests, then the measure is reliable.

Which is an example of an unreliable measurement?

The question to ask is: if we use the same scale to measure the same construct multiple times, do we get pretty much the same result every time, assuming the underlying phenomenon is not changing? If not, the measurement is unreliable. An example of an unreliable measurement is people guessing your weight.